Video visualisation in the browser

I am at the Movement Computing Conference (MOCO) in Montpellier and have been discussing motion capture and analysis all day. Someone asked about my work on video visualisation, which reminded me of my long-standing wish to create a browser-based visualiser. I have been developing video visualisation tools for more than two decades. In the beginning, I did it with Max/MSP/Jitter, then moved on to Matlab, and later Python. Until recently, web technologies were not advanced enough, and hardware was not powerful enough, to do anything in the browser in real time. And I didn’t have the programming skills to make it work. Now, with the help of CoPilot, I have finally made it happen: VideoViz is here! ...

Retrieving data from an ORCID profile

After concluding that it is not viable to use institutional person pages to build a “Who’s Who” directory for MishMash, I found yesterday that NVA can be a good solution. However, it would only cover affiliated (Norwegian) researchers, which may be too restrictive for MishMash, where we also want to list non-academic, non-affiliated, and international researchers. ORCID may then be a better solution. This is an international registry where researchers can register themselves (check my ORCID profile). But what information is available there, and how can it be retrieved? ...

Retrieving data from NVA

I have seen that it is possible to build a complete CV from data in NVA, the Norwegian research registry. As part of my quest to collect data on researchers connected to MishMash, I am looking for the best data source(s). A quick check of my own personal page at UiO showed that institutional person pages are not the right solution. But what about NVA? Perhaps that is a viable solution? ...

Building a 'Who's Who' directory from institutional data

The Open Graph standard has helped “automagically” collect information about partner events on MishMash.no. Now, we have started building a “who’s who” directory, and I have begun looking into how we can pre-populate pages from existing academic identity sources rather than asking everyone to fill out web forms. My first inclination was to look at what is available on institutional websites. Most researchers have at least one institutional personal website. In this blog post, I look at what can be retrieved from my UiO page. ...

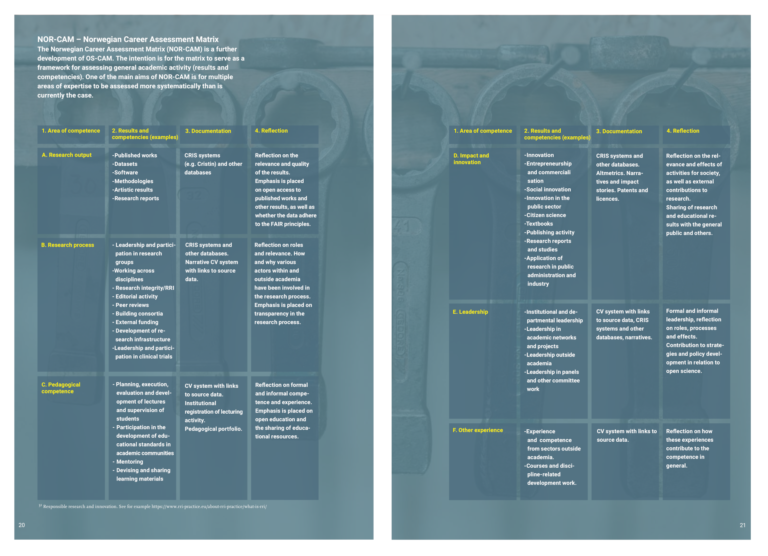

Building a NOR-CAM CV for Alexander Refsum Jensenius

I have previously explored how NotebookLM can generate ERC-style CVs based on publicly available research information. Now, I was curious to see whether it could also make a complete CV following the guidelines of the Norwegian Career Assessment Matrix (NOR-CAM). The NOR-CAM principles: Creating a CV that follows the NOR-CAM principles is less about listing dates and more about telling the story of an academic career through a balance of quantitative data and qualitative reflection. If you are interested in learning more about NOR-CAM, have a look at the full report. Here is a 4-minute video introduction to the system: ...

Understanding the Open Graph Standard

We are currently working on developing the MishMash webpages. Since I don’t like adding and editing the same information in multiple places, I have been interested in whether the MishMash pages can (as far as possible) “automagically” collect information from other locations. When setting up our partner events pages, I realised that it was possible to collect most of the useful information (title, location, date, image) from the metadata of the pages already hosted on other websites. This makes it much easier to add partner events than if we had to set up our own pages on MishMash.no, which would also duplicate much of the content. The standard making this happen is called Open Graph. Since I didn’t know about this before I began my little adventure, here is a short overview. ...
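To make the idea concrete, here is a minimal Python sketch of how Open Graph metadata can be read from a page. It is not the code used on MishMash.no: the class name, the function name, and the sample HTML are all made up for illustration. It only relies on the fact that Open Graph properties are published as `<meta property="og:..." content="...">` tags in the page head. The basic Open Graph properties are `og:title`, `og:type`, `og:image`, and `og:url`; location and date are not among them, so a real event page would have to expose those through other metadata.

```python
from html.parser import HTMLParser


class OpenGraphParser(HTMLParser):
    """Collect <meta property="og:..."> tags into a dictionary."""

    def __init__(self):
        super().__init__()
        self.properties = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        prop = attributes.get("property") or ""
        content = attributes.get("content")
        if prop.startswith("og:") and content is not None:
            # Keep the first value if a property is repeated.
            self.properties.setdefault(prop, content)


def extract_open_graph(html):
    """Return a dict of Open Graph properties found in an HTML string."""
    parser = OpenGraphParser()
    parser.feed(html)
    return parser.properties


# Hypothetical event page, made up for illustration only.
SAMPLE_HTML = """
<html><head>
<meta property="og:title" content="Example partner event">
<meta property="og:type" content="website">
<meta property="og:url" content="https://example.org/events/demo">
<meta property="og:image" content="https://example.org/images/demo.png">
<meta property="og:description" content="A made-up event used for testing.">
</head><body></body></html>
"""

if __name__ == "__main__":
    for key, value in extract_open_graph(SAMPLE_HTML).items():
        print(f"{key}: {value}")
```

In practice, the HTML string would come from fetching the partner's event page, and the resulting dictionary could be used to fill in the title and image of an event entry.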

Testing GitHub Agents for cleaning up the Musical Gestures Toolbox

Over the last few days, we have run a toolbox hackathon between the three music-related research centres of excellence in the Nordic countries (MIB, MMBB, and RITMO). Researchers across all centres are actively developing various toolboxes; my own contribution is the Musical Gestures Toolbox (MGT). Due to all the MishMash things happening lately, I was unable to spend much time hacking myself. Instead, I took the opportunity to test how GitHub Agents could assist. ...

MishMash formally opened

MishMash was formally opened yesterday with a full-day program featuring a hackathon, research application workshop, work package meetings, industry panel, match-making session, and, finally, the grand opening ceremony in the Aula of the University of Oslo. Research application workshop: The day began with a research application workshop held by Thomas de Ridder (UiB) with good support from Siv Haugan and Anette Askedal from the Research Council of Norway (RCN). They gave an overview of different funding opportunities from both RCN and the EU and gave tips on how to write a competitive grant application. ...

No bullets, please!

This post has a simple mission: stop making template-based “title + bullet point”-style slides for your presentation. Yes, most presentation software suggests this as a default, but that doesn’t make it any better. Instead, think about your slides as complementary to what you are saying. Auditory–visual perception: Human perception is inherently multimodal, meaning that we continuously experience the world with all our senses. In a presentation, that means the audience is processing your voice, your slides, your gestures, and the room itself as part of one combined experience. ...

Building a local LLM-driven chat for my web page

I wanted to test whether I could add a local chatbot to arj.no/chat/ that answers questions about my website content and not the whole internet. Since my blog is built using Hugo, a static site generator, I don’t have any server in the background that can host the Large Language Model (LLM). Instead, I looked for a simple solution with standard web technologies and files. Running a model in the browser with WebLLM: After discussing with CoPilot, I ended up using WebLLM, which allows language models to run inside the browser. It relies on WebGPU, which makes local AI processing possible if you have Edge or Chrome available. ...